Innovations have the potential to help virtual sellers interpret customers’ emotional reactions. But how close are these technologies to sealing the deal?

Salespeople are great at reading faces. They need to be. After all, most of them are familiar with the “7-38-55 Rule.”

This psychological theory holds that during a conversation, we ascribe most meaning (55%) to the other person’s expressions and actions and pay some attention to their voice (38%). The listener receives a mere 7% of the message from the speaker’s words, according to the theory. In other words, nonverbal cues and body language really do count.

And that’s a challenge for today’s salespeople. Since the pandemic began in early 2020, the industry has taken a hard turn into virtual selling. And it’s much harder to gauge the efficacy of your sales pitch when you’re addressing a collection of pixels on a computer screen.

Mick Jackowski, Ed.D., marketing professor at Metropolitan State University of Denver, has been studying how recent advances in sales technology such as artificial intelligence and biometrics might help salespeople read clients better during remote meetings. He’s also embracing these new technologies in his classes as a way to prepare students for an ever-evolving field.

“Research shows that remote meetings are only 80% as effective as in-person meetings,” Jackowski said. “So our challenge now is to discover ways to make up that lost 20% within a virtual setting.”

This year, Jackowski’s search for answers took him all the way to Helsinki.

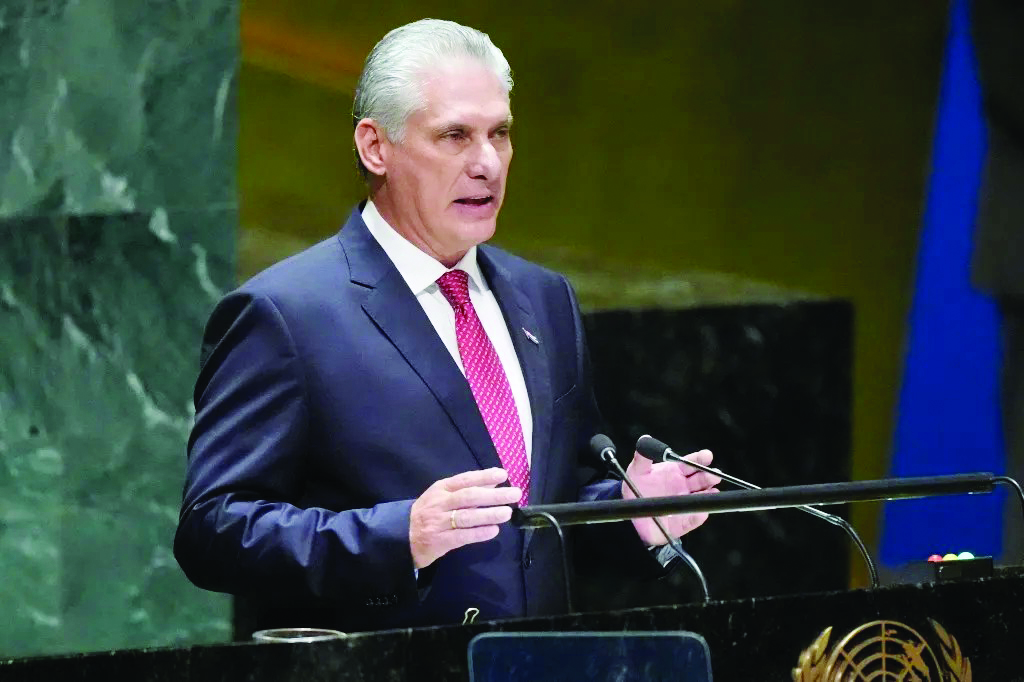

After winning a Fulbright U.S. Scholar Award, he spent six months at Haaga-Helia University of Applied Sciences, home to the world’s most advanced university-based sales laboratory. The lab incorporates AI and biometrics (the science of tracking and analyzing people’s unique biological characteristics) into its approach.

The highly sophisticated technology has helped the Helsinki lab’s research add scientific rigor to the truism: It’s often not what you say but how you express yourself while you’re saying it that counts.

Reliable readings

The Helsinki lab specializes in identifying such nonverbal cues and establishing whether a speaker’s words complement or contradict their thoughts and feelings.

How does it work? During interviews, the lab technicians use sensors, cameras and software to track four physical characteristics: facial expressions, eye movement, galvanic skin response (sweating) and brain waves.

“By closely analyzing the subject’s physical responses during a conversation, assisted by AI that uses computer vision technology, the technicians can then provide accurate feedback about the emotions the interviewee is experiencing,” Jackowski said.

That means if someone is anxious but says they’re having a great time, the readings will reliably point to “anxious.” Jackowski described the system as “incredibly perceptive.” But here’s the rub: To get such reliable readings, an entire laboratory must be set up, which costs around $20,000. And a full-time specialist is needed to attach the equipment and analyze the data.

That would not be practical for Jackowski’s specific area of interest: virtual meetings. In fact, the only biometric tool suited to monitoring remote meetings is facial expression analysis. So the professor tested whether that single tool might yield similar accuracy. Sadly, it did not.

“I really hoped we could interpret what the person on the other end of a virtual call was feeling purely through their facial expressions,” he said. “But the technology just isn’t there yet.”

Source: red.msudenver.edu